What is language anyway?

Monday 9 February 2026

This morning we walked along a beach and discussed A.I. and language, and that bit of news I read about how A.I. researchers don’t understand how these models work. Considering my wife and I aren’t programmers, and what we know about A.I. (or computing in general) doesn’t extend beyond using things like Gmail or WordPress, or reinstalling the OS successfully (but only on the second or third attempt), our conversation didn’t get very far.

My contribution to the discussion was that A.I. is picking up on something to do with language because language is more than just the sum of its parts. What that something is, though, I’m not sure. I know how vague that sounds, but I’m thinking aloud here, voicing an idea that is taking shape (these generally turn into either insight or, more often, a turd).

My reasoning is that language is more than the sounds we make to signify words and the letters that we use to encode them. When we speak, we relate words to patterns of human experience. So “fuck” can be an exclamation of surprise, a sign of confusion, a passionate statement of fact or a creative pronoun, depending on the situation and context.

When you say “home”, I think house, but it’s not the same thing. “Home” probably triggers deeply personal feelings of nostalgia, warmth, pain and happiness and all that unspoken sub-text. Since I don’t have the same context, I think, “this person is referring to the place they reside in.”

So, words have this contextually elastic quality that maps human experience differently based on each situation. Language is nothing without this context of our firsthand encounters with the world. Context makes communication possible.[1]

There is nothing new about this intuition that words are more than sonic signposts that point at things in the world.

I grew up Roman Catholic, and the phrase “In the beginning was the Word” in the Gospel of John suggests that ‘the Word’ was imbued with some sort of generative power (the verse ends with “and the Word was God”). I see a similar idea in the sacred word Om in Hinduism, a sound that is called a seed syllable.

Cultures from across the world echo this sentiment that words go beyond mere communication. I don’t want to stack references any more than I need to, but there are so many. See the Egyptian, Jewish, African and Japanese traditions, and the runes of the Germanic Futhark.

So anyway. Words have power and context is foundational to it.

So when we got back from our walk, I looked up why A.I. researchers can’t completely figure out how these models work, and from what I understand, these systems learn in two ways. There are the training algorithms, and then there are self-learned algorithms that are internal to the system and which researchers cannot see. Ask these models to explain how they do things and they outright lie or explain it in really weird ways.

Here is an excerpt from Anthropic’s blog post about how the model arrives at the “opposite of small” in different languages. I would assume that it first “thinks” in a primary language and translates it to other languages, using its vast dictionaries. Nope.

Recent research on smaller models has shown hints of shared grammatical mechanisms across languages. We investigate this by asking Claude for the “opposite of small” across different languages, and find that the same core features for the concepts of smallness and oppositeness activate, and trigger a concept of largeness, which gets translated out into the language of the question.

So, I’m back where I started this morning.

Are A.I. models picking up on these meta aspects of language? What are the patterns they see when they answer our questions? (And why the hell does Claude have a specific ‘neuron’ for the Golden Gate Bridge?)

Tuesday 17 February 2026

Today I came across Nicholas Andresen’s article “How AI Is Learning to Think in Secret” and found out about thinkish and neuralese, the ways in which machines express their chain-of-thought, which researchers use to keep track of how (or what) the machine thinks.

Later, I asked Google’s Gemini what it learned about language during its training. Here is a snippet from the answer.

Analysing Linguistic Dimensions

I begin by categorising language into its explicit components (syntax, semantics) and its implicit dimensions (pragmatics, cultural nuance, and emotional subtext).

Evaluating Training Data

I consider the nature of the “Text” I was trained on, recognising that text is not just a sequence of words but a record of human intent, situation, and cultural framing.

Synthesising Pattern Recognition

I conclude that by processing trillions of connections between these records, my architecture learns to replicate the “invisible” layers of language—such as tone and context—even without physical senses.

The kicker is in the latter part of each of these statements.

- Implicit dimensions (pragmatics, cultural nuance, and emotional subtext)

- Nature of the Text (human intent, situation, and cultural framing)

- Invisible layers of language (tone and context)

This freaking rabbit-hole is digging itself.

Notes

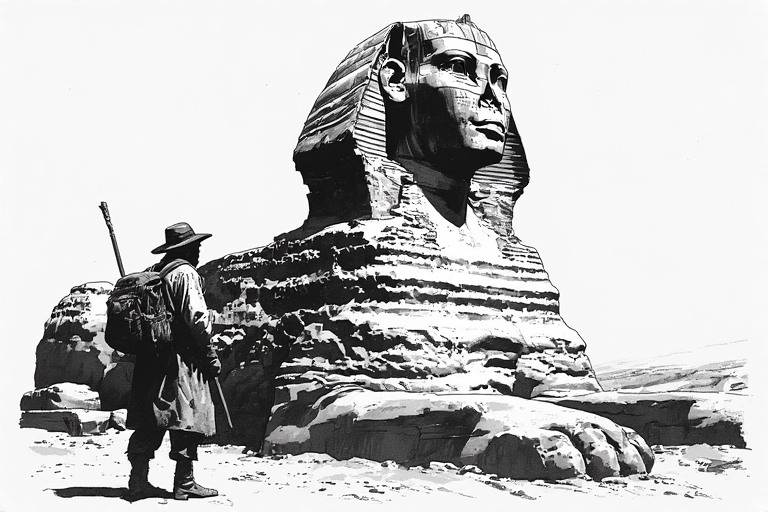

- The A.I.-generated image at the top is about the Riddle of the Sphinx.

Groucho Marx was so good at subverting context, and turning meaning on its head. Sample these:

- “Outside of a dog, a book is a man’s best friend. Inside of a dog it’s too dark to read.”

- “My brother thinks he’s a chicken - We don’t talk him out of it because we need the eggs.”

- “Time flies like an arrow; fruit flies like a banana.”